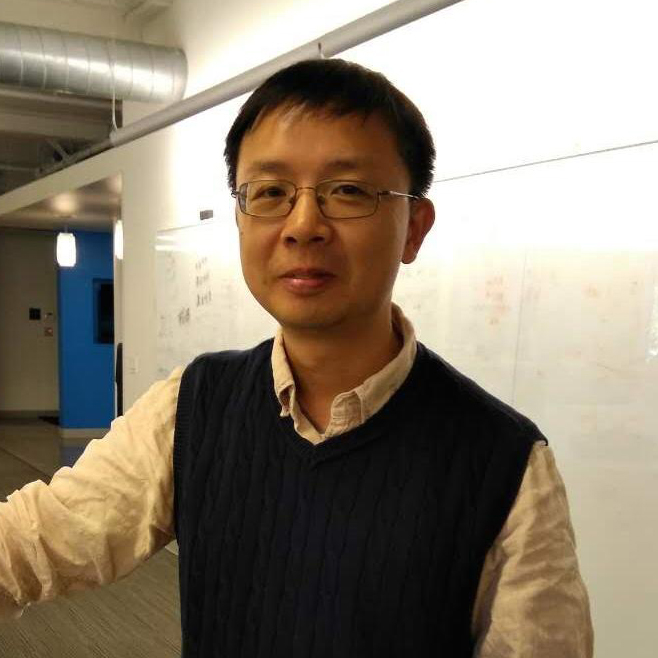

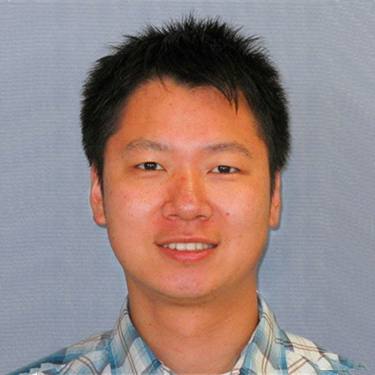

Track Speaker: Siddharth Singh

LinkedIn Engineering Manager

Siddharth Singh is an Engineering Manager in the data infrastructure group at LinkedIn, where he is responsible for the Voldemort and Venice projects. Previously at LinkedIn, he worked as an engineer and architected and built Voldemort's multi-data-center strategy. Before LinkedIn, Siddharth worked on the core MongoDB database, where he contributed to replication and sharding technology. Siddharth has an MS from Purdue University with a focus on distributed database systems.

Time: April 17, 10:50

Location: 309A